Terraform and Ansible are the right tools for this model. But they only work at enterprise scale when each tool stays in its lane and follows a strict separation of concerns. And that separation only holds up when orchestration is decoupled using a blueprint that matches official HashiCorp guidance and real-world Ansible enterprise patterns.

Overview of the Article

This article covers:

- Using Packer with Ansible to preconfigure hardened AMIs. This approach shifts heavy configuration to the image build phase, shortening runtime provisioning (from about 15 minutes to about 3 in the scenario discussed later) and keeping environments consistent and reproducible during scale-out events.

- Structuring the CI/CD pipeline into distinct Terraform and Ansible stages: Plan, Provision, Inventory Sync, and Configure. This separation enables asynchronous orchestration and artifact-based handoffs, aligning the workflow with GitOps and policy-as-code best practices.

- Modernizing Terraform state and secret management by replacing DynamoDB locks with Terraform’s native S3 lockfile mechanism for simpler concurrency control. Secrets are retrieved dynamically from Vault at runtime, supporting zero-trust principles and preventing sensitive data from being written to state files or disks.

The Principle of Clear Separation

A reliable automation pipeline begins with a strict, well-documented separation of concerns. Terraform and Ansible must manage distinct layers of the stack, never overlapping.

| Tool | Core Responsibility | Configuration Scope | Enterprise Rationale |
| --- | --- | --- | --- |
| Terraform | Infrastructure Provisioning | Cloud and network layer: VPC, subnets, EC2 instances, load balancers, IAM roles, security groups | Stateful: defines the existence and dependencies of resources across the entire cloud graph. |
| Ansible | Configuration Management | Operating system layer: package installation, users, filesystem, application deployment, OS firewalls, service management | Agentless: enforces desired state on running hosts without requiring a persistent, heavy client. |

The Immutable Principle – Decoupling Build from Run

Immutable infrastructure separates image build-time configuration from instance runtime configuration.

| Component | Responsibility | Phase | Configuration Type |
| --- | --- | --- | --- |
| Packer + Ansible | Build & Configuration: creates the base image with common software, agents, and OS hardening | Image Build-Time | Static (OS, base software, hardening) |
| Terraform | Provisioning & Deployment: launches instances using the new Packer-built image | Infrastructure Provisioning | Infrastructure (network, load balancer, scaling group) |
| Cloud-Init / User Data | Finalization: injects runtime secrets and parameters | Instance Run-Time | Dynamic (secrets, tags) |

Scenario:

In a mutable environment, configuring a new instance can take 15 minutes. With immutable infrastructure, most configuration is pre-baked into the image. That cuts the setup time to just 3 minutes.

Source: HashiCorp Developer, “Build a golden image pipeline with HCP Packer”

Packer + Ansible – Building the Golden Image

Packer automates the creation of temporary instances and captures them as reusable images. Ansible provisions the OS and middleware inside these instances.

A. Ansible Provisioner Choices

| Provisioner | Mode | Pros | Cons |
| --- | --- | --- | --- |
| ansible | Runs from the local CI/CD runner over SSH | Fast; no need to install Ansible on the build instance | Requires open SSH access |
| ansible-local | Runs on the temporary instance itself | Ideal for air-gapped or secure environments | Slower; Ansible must be installed for every build |

B. Packer HCL2 Example

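    packer {
      required_plugins {
        amazon = {
          source  = "github.com/hashicorp/amazon"
          version = ">= 1.0.0" # assumed constraint; pin to the version you test with
        }
        ansible = {
          source  = "github.com/hashicorp/ansible"
          version = ">= 1.0.0" # assumed constraint
        }
      }
    }

    source "amazon-ebs" "webserver" {
      region        = "us-east-1"
      instance_type = "t3.medium"
      source_ami    = "ami-0abcdef1234567890" # placeholder base AMI ID
      ssh_username  = "ubuntu"

      # The builder requires ami_name. The naming scheme and tag here are
      # assumptions, chosen to match the filters in the Terraform example.
      ami_name = "golden-webserver-v${formatdate("YYYYMMDDhhmmss", timestamp())}"
      tags = {
        Environment = "Production"
      }
    }

    build {
      sources = ["source.amazon-ebs.webserver"]

      # Make sure Python is present so Ansible modules can run on the instance.
      provisioner "shell" {
        inline = ["sudo apt-get update && sudo apt-get install -y python3 python3-pip"]
      }

      provisioner "ansible" {
        playbook_file    = "./ansible/golden_image.yml"
        user             = "ubuntu"
        extra_arguments  = ["--scp-extra-args='-T'", "-e", "packer_build_name={{.BuildName}}"]
        ansible_env_vars = ["ANSIBLE_CONFIG=./ansible/ansible.cfg", "ANSIBLE_ROLES_PATH=./ansible/roles"]
      }

      # Clean up before the image is captured. Bash is used because
      # "history" is a bash builtin and is not available in plain sh.
      provisioner "shell" {
        inline_shebang = "/bin/bash -e"
        inline         = ["sudo rm -rf /tmp/* /var/log/*", "history -c"]
      }
    }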

Terraform Deployment – Referencing the Golden Image

Terraform handles infrastructure provisioning. However, it leaves OS configuration to the Packer-built image it references.

    data "aws_ami" "latest_app_image" {
      most_recent = true
      owners      = ["self"]

      filter {
        name   = "name"
        values = ["golden-webserver-v*"]
      }

      filter {
        name   = "tag:Environment"
        values = ["Production"]
      }
    }

    resource "aws_launch_template" "web_template" {
      image_id = data.aws_ami.latest_app_image.id

      # Render cloud-init with runtime parameters. The database is assumed to
      # be an aws_db_instance named app_db that is defined elsewhere.
      user_data = base64encode(templatefile("${path.module}/cloud-init.yaml", {
        DB_HOST = aws_db_instance.app_db.address
        REGION  = var.aws_region
      }))
    }

Pipeline Architecture:

- Changes to Ansible roles trigger the Image Build Pipeline, producing a new image artifact.

- The Infrastructure Deployment Pipeline then deploys that image via Terraform. This refreshes Auto Scaling Group instances automatically.
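
The trigger logic behind these two pipelines can be sketched in a few lines of Python. This is a minimal illustration, not part of any CI product: the path prefixes and pipeline names are hypothetical, and a real setup would normally express the same rules in the CI system's own path filters.

```python
# Hypothetical repository layout; these prefixes are assumptions for
# illustration and are not defined anywhere in this article.
IMAGE_BUILD_PREFIXES = ("ansible/roles/", "packer/")
INFRA_DEPLOY_PREFIXES = ("terraform/",)


def pipelines_for_changes(changed_files):
    """Return the ordered list of pipelines that a set of changed files triggers."""
    image_changed = any(f.startswith(IMAGE_BUILD_PREFIXES) for f in changed_files)
    infra_changed = any(f.startswith(INFRA_DEPLOY_PREFIXES) for f in changed_files)

    pipelines = []
    if image_changed:
        # A new image has to exist before Terraform can roll it out.
        pipelines.append("image-build")
    if image_changed or infra_changed:
        # A fresh image always needs a deploy so the scaling group picks it up.
        pipelines.append("infra-deploy")
    return pipelines
```

Keeping the ordering explicit (image build first, deploy second) mirrors the artifact-based handoff: the deploy pipeline only ever consumes an image that already exists.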

Decoupled Workflows for Seamless CI/CD Orchestration

Rather than embedding configuration logic inside Terraform (for example, through provisioners), use a purpose-built tool for each job. This is achieved with a decoupled workflow orchestrated via GitLab CI, GitHub Actions, or Jenkins.

The Four-Stage Enterprise Pipeline

| Stage | Tool | Function | Key Task |
| --- | --- | --- | --- |
| 1. Plan | Terraform | Infrastructure Validation | Runs terraform plan -out=tfplan and executes Policy-as-Code checks (e.g., OPA). |
| 2. Provision | Terraform | Infrastructure Creation | Runs terraform apply tfplan. Creates instances and outputs connectivity data. |
| 3. Inventory Sync | Custom Script / Dynamic Plugin | State Handoff | Reads Terraform outputs (e.g., IPs and hostnames) and generates a temporary Ansible inventory file. |
| 4. Configure | Ansible | Host Configuration & Deployment | Runs ansible-playbook -i dynamic_inventory.ini site.yml |
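
To make the stage ordering concrete, here is a minimal Python sketch of a runner that executes the four stages in sequence and stops at the first failure. It is an illustration only: in GitLab CI, GitHub Actions, or Jenkins each stage would normally be its own job with artifacts passed between them, the policy check in the Plan stage is omitted, and the injectable run parameter is an assumption added so the control flow can be exercised without Terraform or Ansible installed.

```python
import subprocess

# The four stages from the table, in order. The inventory script name matches
# the generator shown in this article; the runner environment is assumed.
STAGES = [
    ("plan", ["terraform", "plan", "-out=tfplan"]),
    ("provision", ["terraform", "apply", "tfplan"]),
    ("inventory-sync", ["python3", "dynamic_inventory_generator.py"]),
    ("configure", ["ansible-playbook", "-i", "dynamic_inventory.ini", "site.yml"]),
]


def run_pipeline(run=subprocess.run):
    """Run each stage in order and return the names of the completed stages.

    Raises RuntimeError as soon as a stage exits with a non-zero code, so
    later stages never run against half-provisioned infrastructure.
    """
    completed = []
    for name, command in STAGES:
        result = run(command)
        if result.returncode != 0:
            raise RuntimeError(
                f"stage '{name}' failed with exit code {result.returncode}"
            )
        completed.append(name)
    return completed
```

Calling run_pipeline() with no arguments uses subprocess.run directly; passing a stub makes it easy to dry-run the ordering in a unit test.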

Dynamic Inventory Generation (Scripted Handoff)

    # dynamic_inventory_generator.py (runs on the CI/CD runner)
    import json
    import os
    import subprocess


    def create_ansible_inventory(output_name="webserver_ips",
                                 inventory_file="dynamic_inventory.ini",
                                 tf_root="/path/to/tf/root"):
        """Write an INI inventory from a Terraform output that holds a list of IPs."""
        try:
            result = subprocess.run(
                ["terraform", "output", "-json", output_name],
                capture_output=True, text=True, check=True, cwd=tf_root,
            )
        except subprocess.CalledProcessError as e:
            raise RuntimeError(f"Failed to read Terraform output: {e.stderr}") from e

        ips = json.loads(result.stdout)
        # Only the path to the key is written to the inventory, never the key itself.
        key_path = os.environ["SSH_KEY_PATH"]

        lines = ["[webservers]"]
        for ip in ips:
            lines.append(
                f"{ip} ansible_user=ubuntu ansible_ssh_private_key_file={key_path}"
            )

        with open(inventory_file, "w") as f:
            f.write("\n".join(lines) + "\n")
        print(f"Inventory created: {inventory_file}")


    if __name__ == "__main__":
        create_ansible_inventory()

Advanced Ansible Configuration

For last-mile deployment and host configuration in the “Configure” stage, specific techniques ensure speed and reliability:

A. Performance Tuning

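    # ansible.cfg
    [ssh_connection]
    pipelining = True

- Forks: Increase parallelism by raising the forks setting (for example, 50-100, depending on the control machine's CPU and memory).

- Mitogen Strategy: Speed up SSH-heavy playbooks. Mitogen is a third-party plugin that supports only specific Ansible versions, so check compatibility before adopting it.

With Mitogen installed and its strategy plugin directory listed under strategy_plugins in ansible.cfg, select the strategy through the ANSIBLE_STRATEGY environment variable (or the strategy setting in ansible.cfg), since ansible-playbook has no --strategy flag:

    ANSIBLE_STRATEGY=mitogen_linear ansible-playbook -i inventory.yml site.yml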

B. Idempotency & Fault Tolerance

    - name: Deploy application transactionally
      block:
        - name: Stop the application
          ansible.builtin.service:
            name: app
            state: stopped

        - name: Copy the new artifact
          ansible.builtin.copy:
            src: new_app.war
            dest: /var/lib/app/

        - name: Start the application
          ansible.builtin.service:
            name: app
            state: started

      rescue:
        - name: Report the failure
          ansible.builtin.debug:
            msg: "Deployment failed"

        - name: Bring the service back up
          ansible.builtin.service:
            name: app
            state: started

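The block/rescue construct provides error handling: if any task in the block fails, the rescue tasks run, here reporting the failure and restarting the service so the host is not left down. Idempotency comes from the modules themselves, because service and copy only change what differs from the desired state. Note that this example restores availability but not the previous artifact; a full rollback would also need to put the old file back.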

Enterprise Multi-Environment Governance and Standardization

Securing Your Terraform State (Zero Trust and Locking Evolution)

The Terraform state file is a sensitive asset. The setup must reflect the latest official best practices regarding concurrency and security.

Remote State and the S3 Locking Transition (Official Update)

While the pairing of S3 for state storage and DynamoDB for locking has been the “battle-tested” standard, HashiCorp has evolved the recommended practice:

- Traditional (Legacy): Using a dedicated DynamoDB table (dynamodb_table) ensured atomic consistency for concurrent write operations across distributed teams.

- Modern (Recommended, TF v1.11+): The S3 backend now supports native state locking via use_lockfile = true (introduced as experimental in Terraform 1.10 and generally available from 1.11). This leverages S3’s conditional write functionality to create a .tflock file next to the state object, removing the need for an external DynamoDB resource, reducing cost, and simplifying bootstrapping.
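
The idea behind lock-by-conditional-write can be shown with a small in-memory simulation. This is a sketch of the concept only, not Terraform's implementation and not a real S3 client: ConditionalStore, acquire_lock, and release_lock are hypothetical names, and put_if_absent stands in for an object write that succeeds only when no object already exists under the key.

```python
class ConditionalStore:
    """In-memory stand-in for an object store with create-if-absent writes."""

    def __init__(self):
        self._objects = {}

    def put_if_absent(self, key, body):
        # Mirrors a conditional write: succeed only if the key is still free.
        if key in self._objects:
            return False
        self._objects[key] = body
        return True

    def delete(self, key):
        self._objects.pop(key, None)


def acquire_lock(store, state_key, owner):
    """Try to take the lock for a state file; return True on success."""
    return store.put_if_absent(f"{state_key}.tflock", owner)


def release_lock(store, state_key):
    """Release the lock by deleting the lock object."""
    store.delete(f"{state_key}.tflock")
```

Because the existence check and the write are a single atomic operation on the store, two runners racing for the same state key cannot both succeed, which is the property the DynamoDB table used to provide.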

Code Example: Simplified S3 Backend Configuration

    terraform {
      required_version = ">= 1.11.0"

      backend "s3" {
        bucket       = "maxapex-prod-tf-state"
        key          = "services/app-cluster/terraform.tfstate"
        region       = "us-east-1"
        encrypt      = true # Enables server-side encryption of the state object
        use_lockfile = true # Enables native S3 locking
        # Omit the dynamodb_table attribute entirely when using native locking
      }
    }

Migrating from DynamoDB? Ensure IAM roles cover s3:PutObject, s3:GetObject, and s3:DeleteObject permissions on the .tflock and state files.
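
As a rough illustration of those permissions, the following Python sketch builds an IAM policy document for one state key and its lock file. The function name is hypothetical, and the s3:ListBucket statement is an assumption added because the backend typically needs to list the bucket; check the S3 backend documentation for the exact minimum your setup requires.

```python
import json


def state_access_policy(bucket, key):
    """Build an IAM policy document covering a state file and its lock file."""
    state_arn = f"arn:aws:s3:::{bucket}/{key}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Assumption: bucket listing is granted alongside object access.
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": f"arn:aws:s3:::{bucket}",
            },
            {
                # The three object-level actions named above, applied to both
                # the state object and the .tflock object next to it.
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:PutObject", "s3:DeleteObject"],
                "Resource": [state_arn, f"{state_arn}.tflock"],
            },
        ],
    }


policy = state_access_policy(
    "maxapex-prod-tf-state", "services/app-cluster/terraform.tfstate"
)
print(json.dumps(policy, indent=2))
```

Generating the document from the bucket and key keeps the policy in step with the backend block, so a renamed state key does not silently lose its lock permissions.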

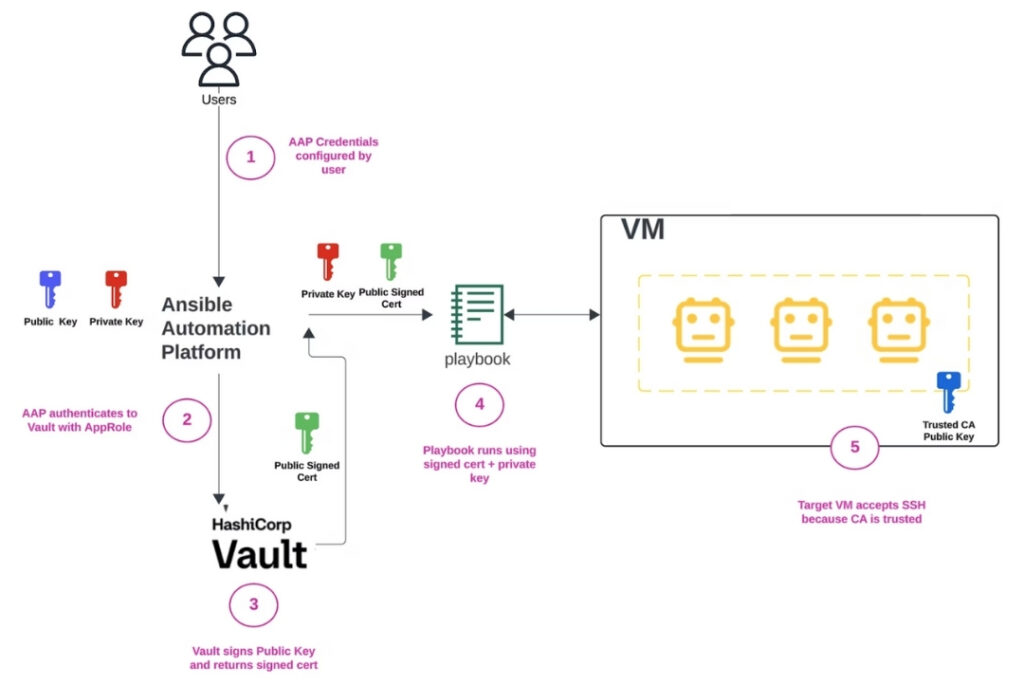

Zero Trust Secrets Retrieval at Runtime

Terraform provisions the infrastructure, but Ansible needs access to runtime secrets such as database credentials and SSH keys. These secrets should never be written to disk or to state files.

Scenario: Terraform provisions an EC2 instance, the database credential is stored in HashiCorp Vault, and the Ansible playbook retrieves it at runtime.

Ansible + Vault Integration:

    # Ansible tasks retrieving a secret from HashiCorp Vault
    - name: Retrieve DB credentials from Vault
      ansible.builtin.set_fact:
        db_password: "{{ lookup('community.hashi_vault.hashi_vault', 'secret=prod/webapp/db:password') }}"
      no_log: true # Keep the secret out of task output and logs

    - name: Deploy application with secret
      community.docker.docker_container:
        # ... other configuration
        env:
          DB_PASS: "{{ db_password }}" # Injects secret as an ephemeral ENV var
      no_log: true

Source: HashiCorp Developer, “Vault SSH Certificate Authority (CA) Workflow”

Required Authentication Context:

The Ansible Control Node must authenticate to Vault. Supported enterprise methods:

- AppRole: A role_id paired with a dynamically issued secret_id (well suited to CI/CD runners).

- AWS IAM: Using runner’s AWS IAM Role/Profile identity.

- Kubernetes Service Account (KSA): Using the KSA token for runners inside a K8s cluster.
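
For the AppRole option, the login exchange can be sketched with the Python standard library alone. This is a minimal sketch under stated assumptions: the AppRole auth method is mounted at its default path (approle), the Vault address is a placeholder, and both function names are hypothetical. The request is built but not sent, so the example stays runnable without a Vault server.

```python
import json
import urllib.request


def build_approle_login(vault_addr, role_id, secret_id):
    """Build (but do not send) the AppRole login request for Vault's HTTP API."""
    body = json.dumps({"role_id": role_id, "secret_id": secret_id}).encode()
    return urllib.request.Request(
        url=f"{vault_addr.rstrip('/')}/v1/auth/approle/login",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def token_from_login_response(raw_body):
    """Extract the client token from the JSON body of a login response."""
    return json.loads(raw_body)["auth"]["client_token"]
```

A runner would send the request with urllib.request.urlopen, pass the response body to token_from_login_response, and export the result as VAULT_TOKEN for the playbook run, keeping the token in memory rather than on disk.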

VCS Structure and Policy-as-Code (PaC)

VCS Structure

Separation is maintained by organizing the repository into /modules for reusable logic and /environments (/dev, /stage, /prod) for environment-specific variables.

PaC

Before terraform apply, Policy-as-Code (e.g., OPA/Rego or HashiCorp Sentinel) runs in the CI/CD pipeline to enforce governance rules such as:

- Enforcing encryption on all storage resources.

- Blocking public subnets in production.

- Validating cost thresholds.
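
To show the shape of such a check, here is a small Python stand-in that inspects the JSON form of a plan (as produced by terraform show -json tfplan). It is not OPA or Sentinel, and it simplifies heavily: only two rules are covered, the function name is hypothetical, and a subnet that assigns public IPs on launch is treated as a proxy for a public subnet.

```python
def find_violations(plan):
    """Return human-readable policy violations found in a parsed plan."""
    violations = []
    for rc in plan.get("resource_changes", []):
        after = rc.get("change", {}).get("after")
        if after is None:
            # Resource is being destroyed; there is nothing to validate.
            continue

        if rc.get("type") == "aws_ebs_volume" and after.get("encrypted") is not True:
            violations.append(f"{rc['address']}: EBS volume is not encrypted")

        if rc.get("type") == "aws_subnet" and after.get("map_public_ip_on_launch") is True:
            violations.append(f"{rc['address']}: subnet assigns public IPs")
    return violations
```

A CI job would load the plan JSON, call find_violations, and fail the Plan stage when the list is non-empty, so that terraform apply never sees a non-compliant plan.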

Conclusion

Without a clear architecture, mixing Terraform and Ansible leads to drift, blind spots, and failures at scale. With the MaxAPEX decoupled model:

- Terraform manages the cloud graph

- Ansible handles runtime config

- Logic stays modular and governed

- Secrets stay ephemeral

- Images stay hardened and replaceable

This delivers true automation: repeatable, reliable, enterprise-grade operations.

ABOUT MAXAPEX

At MaxAPEX, we help you modernize and secure your cloud infrastructure. With DevOps practices, Terraform, and cloud lifecycle management, you can automate tasks, prevent errors, and keep deployments consistent everywhere.

Sources:

Build a golden image pipeline with HCP Packer

Use HCP Packer and HCP Terraform to improve base image pipelines

HashiCorp Developer, “Backend Type: s3 | Terraform”

Goodbye DynamoDB — Terraform S3 Backend Now Supports Native Locking